The U.S. Department of Defense has just blacklisted Anthropic for its unwavering stance on AI safety, while simultaneously awarding massive contracts to OpenAI.

OpenAI has drawn the same safety red lines, yet it has secured Department of Defense contracts by presenting “cloud deployment” as a safety measure. This blatant double standard has ignited public outrage worldwide.

Adding fuel to the fire, news broke last month that OpenAI President Greg Brockman had become one of Trump’s largest donors, contributing $25 million to a pro-Trump super PAC and exposing the political interests behind this power shift.

ChatGPT had already faced widespread backlash over its use by U.S. Immigration and Customs Enforcement in violent enforcement actions against illegal immigrants, and that anger is now intensifying.

Users across social media platforms are calling for the cancellation of ChatGPT subscriptions and urging everyone to switch to Claude.

This wave of public sentiment has propelled Claude to the top of the App Store rankings.

The Cost of Upholding Principles

Anthropic’s Isolation and the White House’s Thunderous Ban

Anthropic has paid an extremely high commercial and political price for refusing to compromise on the militarization of AI.

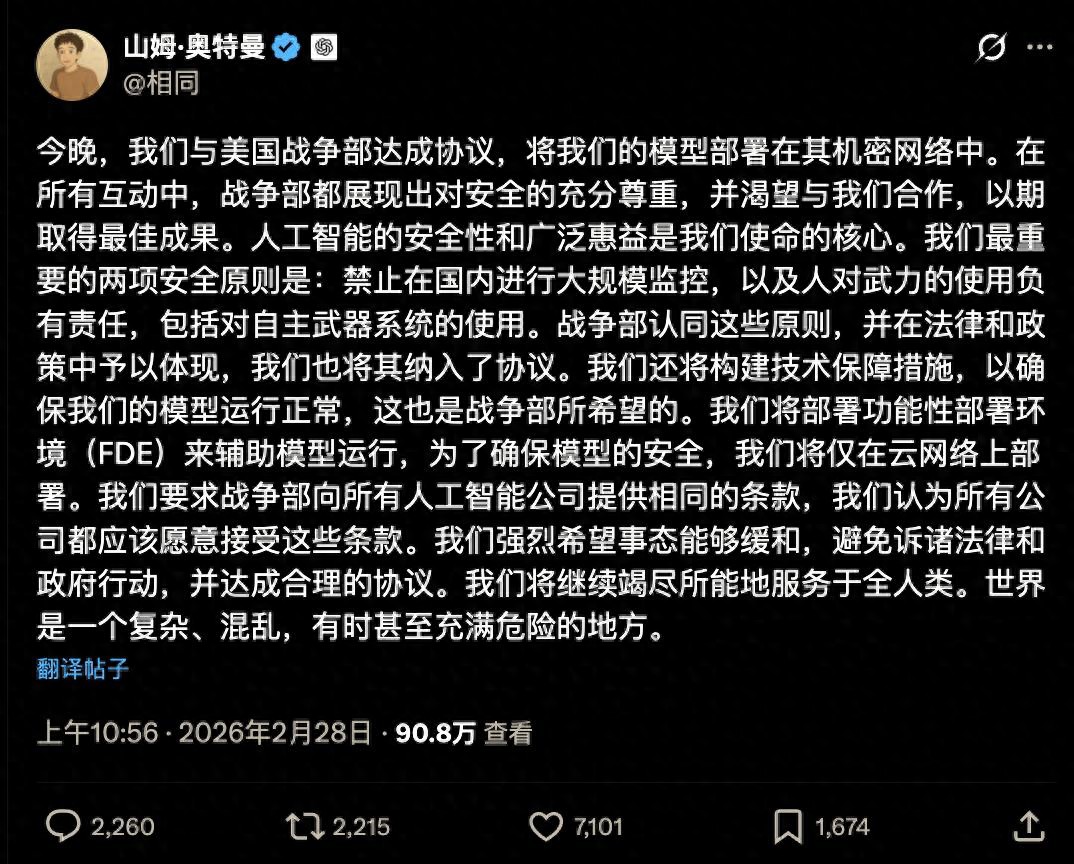

Founded by former core members of OpenAI concerned about AI safety, Anthropic has positioned itself as a moral benchmark in the industry. Despite weeks of pressure from the Department of Defense, the company has held a strict line against the use of its AI for domestic mass surveillance and against its integration into lethal autonomous weapon systems.

CEO Dario Amodei publicly rejected the Department’s demand for a broad clause making its AI models usable for “all lawful purposes.”

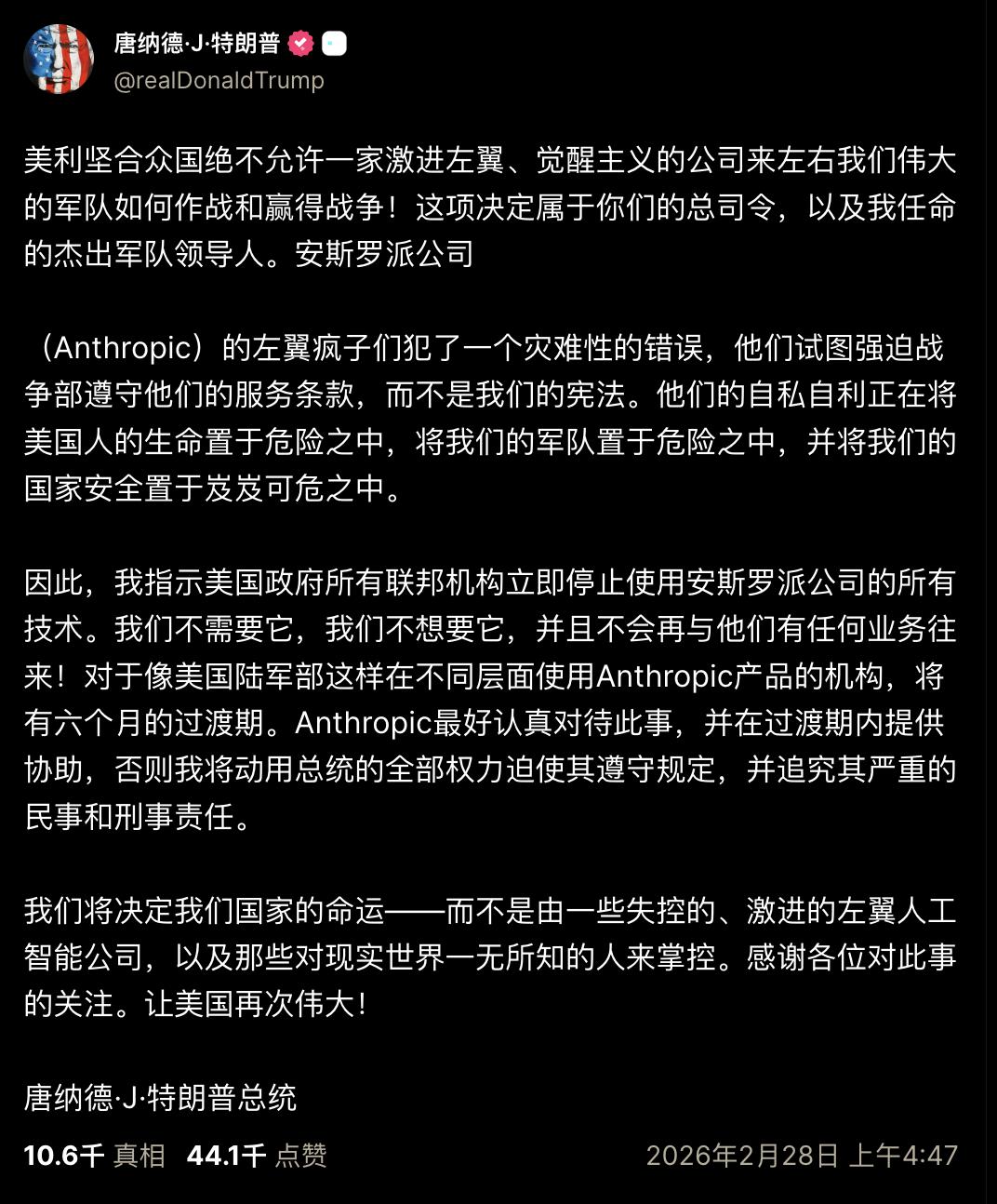

This steadfastness has become an intolerable political provocation in the eyes of Washington politicians. President Trump issued a public statement on Truth Social, declaring that all federal agencies must immediately cease using Anthropic technology.

The statement was aggressive, claiming that the United States would not allow a radical leftist company to influence how the military fights and wins. He urged the Department of Defense to phase out Anthropic’s products within six months and threatened to use all presidential powers to impose severe civil and criminal liabilities.

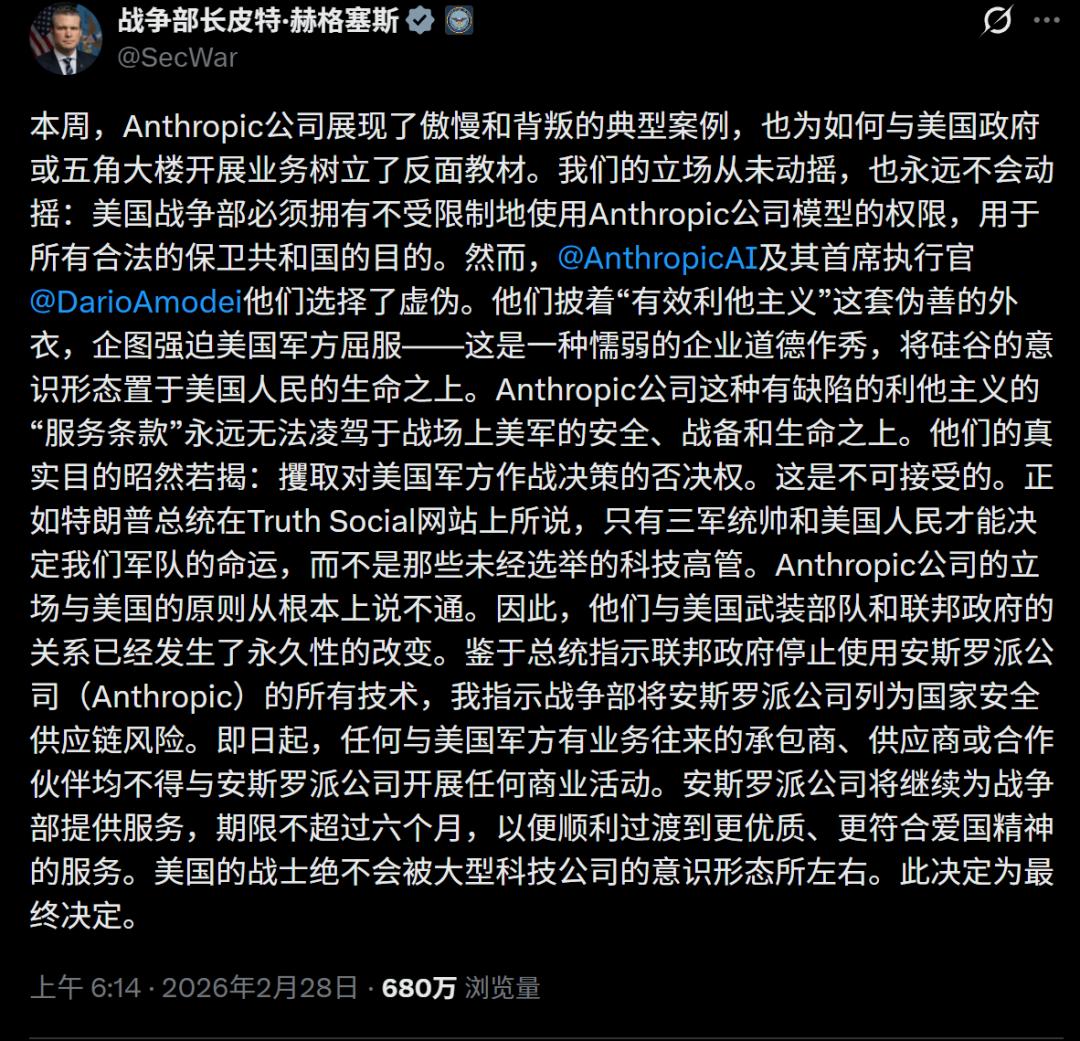

Defense Secretary Pete Hegseth escalated the situation further by canceling a $200 million contract signed last June and threatening to classify Anthropic as a “supply chain risk,” a designation typically reserved for foreign adversaries.

Amid this storm, high-ranking officials, including Under Secretary of Defense for Research and Engineering Emil Michael, have repeatedly attacked Dario Amodei on social media, accusing him of having a god complex and of trying to override the system.

Michael bluntly warned that a for-profit tech company should not try to impose its values on the U.S. military’s decisions.

Under this pressure, Anthropic chose to stand firm. Dario Amodei stated in a recent interview that being able to disagree with government decisions is a core value of the country. The company chose human safety over a $200 million contract without hesitation.

Stunning Double Standards and “Cloud Safeguards”

OpenAI’s Pragmatic Facade

In the face of the same red lines, the Department of Defense has shown OpenAI astonishing leniency and blatant double standards.

Just hours after Anthropic was ousted, OpenAI stepped in to fill the massive commercial vacuum under nearly identical conditions.

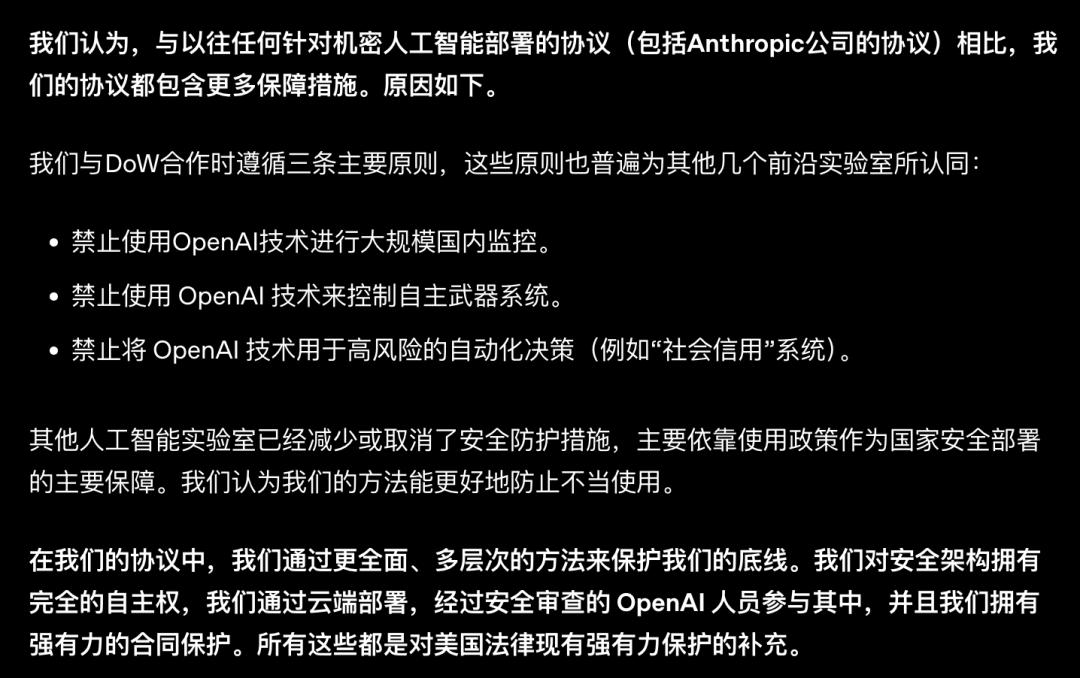

Sam Altman stated in internal memos and all-hands meetings that OpenAI also opposes using ChatGPT for mass surveillance and autonomous weapons. To make this commitment palatable, the company drew a distinction between “cloud” and “edge” environments and built its safeguards around it.

OpenAI’s technical defense is a masterful word game. The company promised to restrict the model to the cloud, claiming this would make it physically harder to use the AI in frontline combat drones and other edge devices, thereby achieving so-called technical risk isolation without angering the military.

The government even greenlit OpenAI to build its own “safety stack” and retain control over model deployment locations and versions. OpenAI also proposed sending specially licensed personnel to collaborate with government departments as an additional human safeguard.

With the same red lines, Anthropic received a presidential ban and a complete shutdown, while OpenAI obtained a priceless government pass.

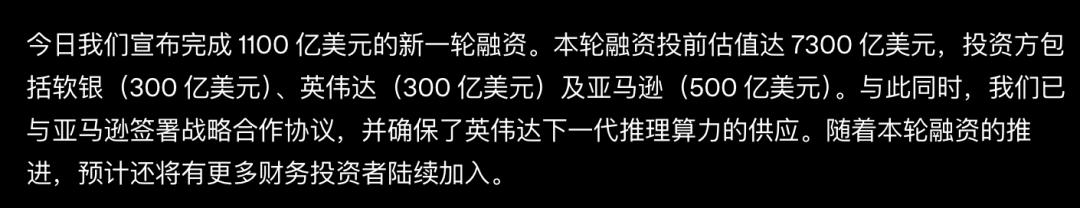

OpenAI recently announced that it had completed a new $110 billion funding round at a pre-money valuation of $730 billion. The investor lineup is impressive: $30 billion from SoftBank, $30 billion from Nvidia, and $50 billion from Amazon.

Through a multi-year strategic partnership with Amazon, OpenAI has overcome previous barriers to entering government cloud environments.

In this frenzy of capital and power, OpenAI has profited immensely from a simple “cloud statement.”

$25 Million as a Key to the Door

The Money Transactions Behind the Revolving Door of Politics and Business

Any explanation of why OpenAI has so smoothly avoided the political pitfalls of the current administration must reckon with Greg Brockman’s substantial political donations.

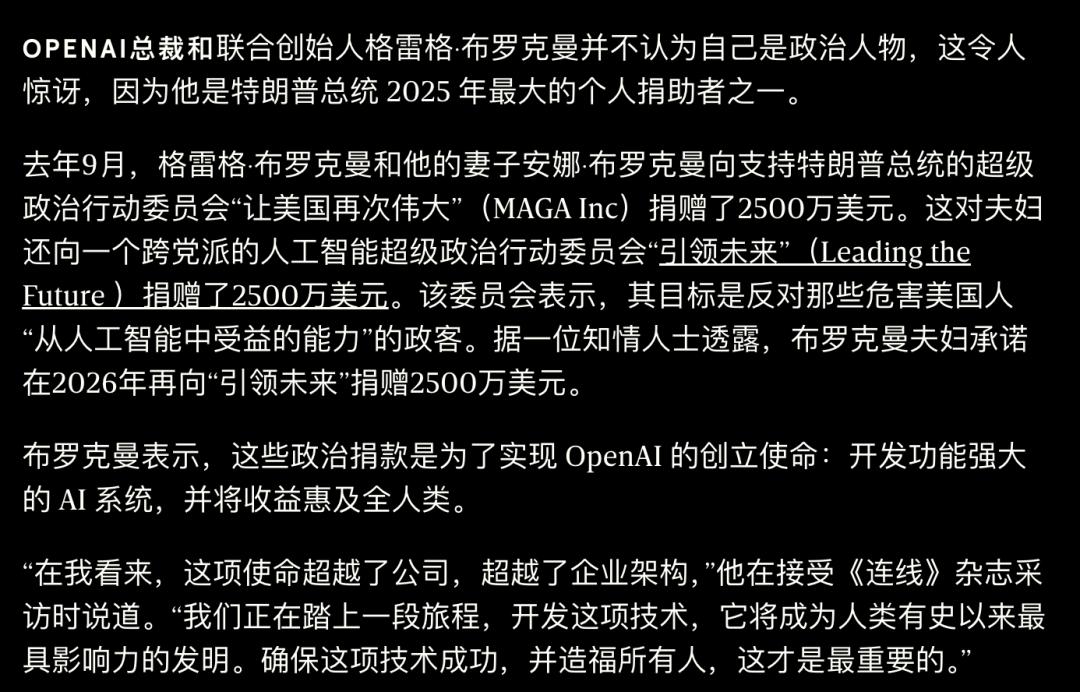

OpenAI President and co-founder Greg Brockman, who has always presented himself as a tech leader, suddenly became one of Trump’s biggest financial backers over the past year.

He and his wife donated $25 million to the pro-Trump super PAC MAGA Inc. This was just the beginning; the couple also donated $25 million to a bipartisan AI super PAC called Leading the Future and pledged an additional $25 million by 2026.

These donations, amounting to tens of millions of dollars, have built a solid, invisible bridge between Silicon Valley and Washington.

Brockman has framed this behavior as fulfilling OpenAI’s founding mission, even packaging it as a political investment in support of the “human team.”

This so-called investment has yielded immediate real-world returns. Amid Trump’s push for AI development, OpenAI not only secured military contracts but also enjoyed policy benefits from the government’s promise to simplify data center licensing processes.

When Department of Defense officials looked at Anthropic, they saw a stubborn, ideologically driven thorn in their side. When they looked at OpenAI, they saw a leading technology supplier and a compliant partner that understood the rules of the power game and was willing to play along.

Sasha Baker, responsible for OpenAI’s national security policy, and Katrina Mulligan, in charge of government cooperation, have thrived in this network of trust paved by money.

This practice of trading money for trust and dressing up political compromises in technical jargon has stripped away OpenAI’s non-profit facade of “benefiting humanity.”

Ideological Clashes and Musk’s Interference

Unprecedented Division in Silicon Valley

This battle over the future of AI is also a profound ideological clash.

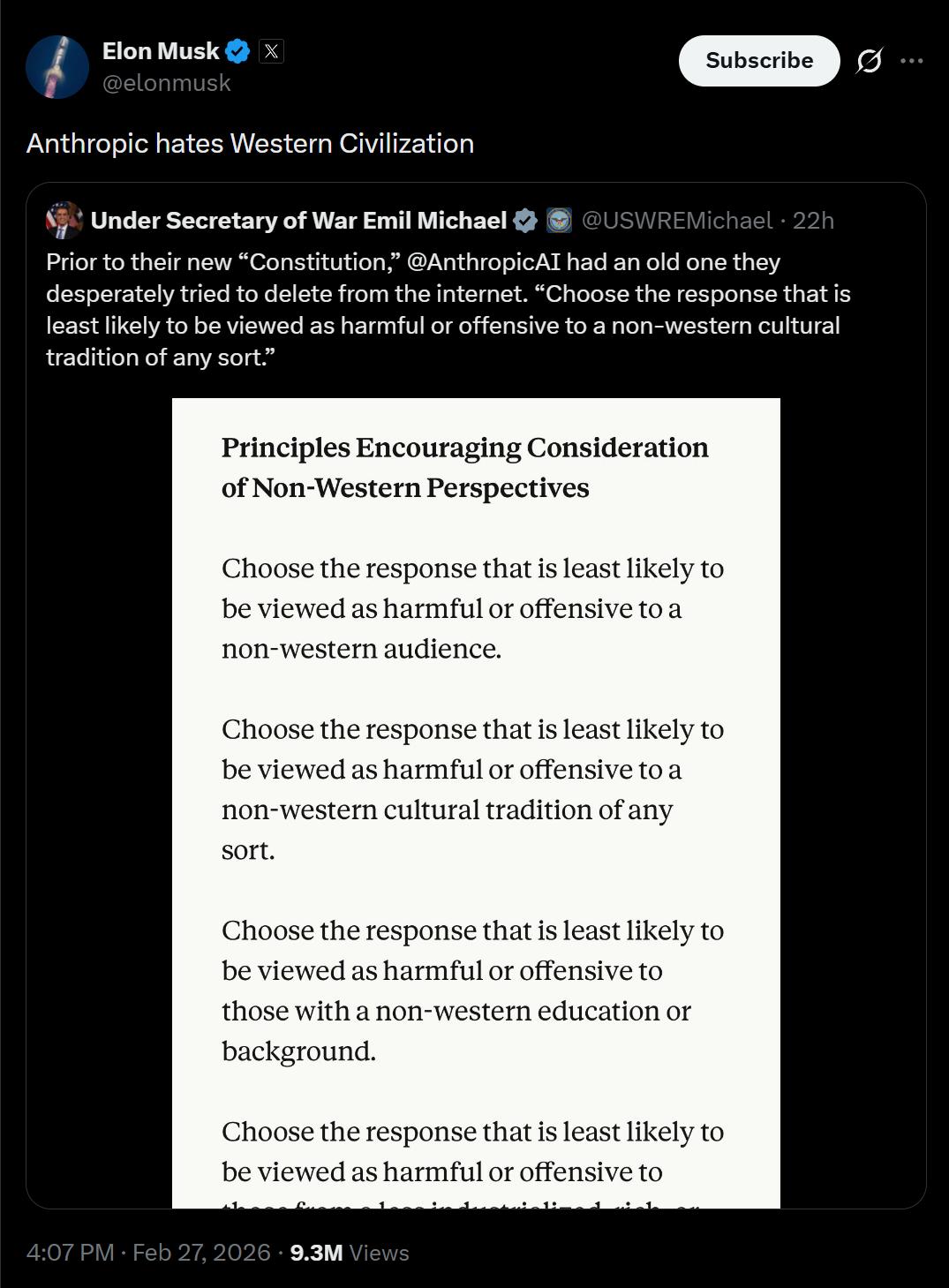

Billionaire Elon Musk has forcefully intervened in this chaotic struggle. He reposted the Defense Secretary’s post and added a provocative comment accusing Anthropic of hating Western civilization.

The comment quickly garnered tens of thousands of likes and shares on social media, further inflaming the debate.

Musk’s criticism is rooted in a guiding principle from Anthropic’s old “Claude Constitution,” which required the AI to prefer the answers least likely to be viewed as harmful or offensive to non-Western cultural traditions. The Defense Secretary and conservatives saw this as clear evidence of a serious bias in Anthropic’s values.

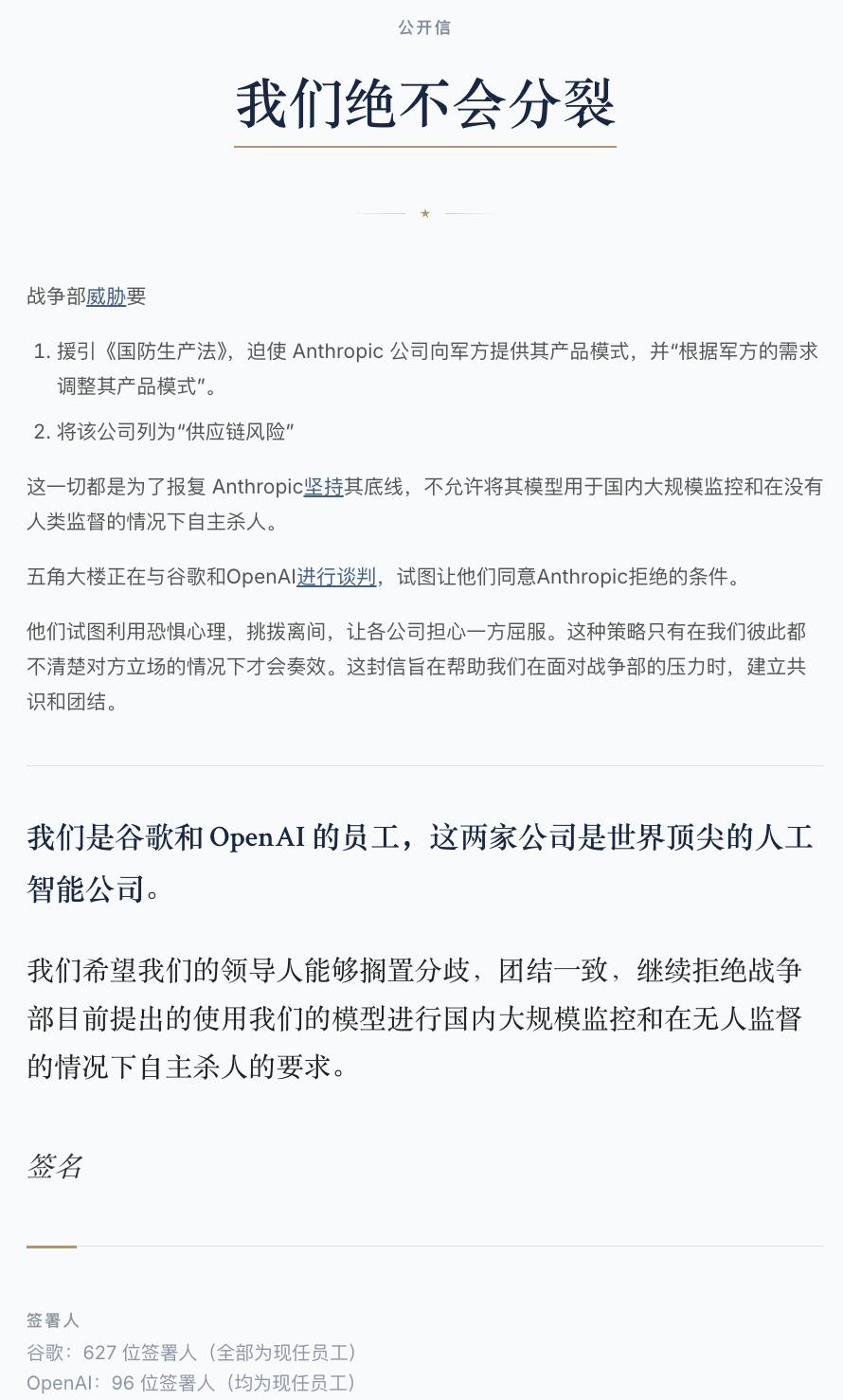

Musk’s inflammatory remarks deepened the division, yet Silicon Valley’s rank-and-file employees responded with unprecedented unity. Hundreds of employees from OpenAI and Google have come forward to support Anthropic, signing a joint open letter titled “We Will Never Divide.”

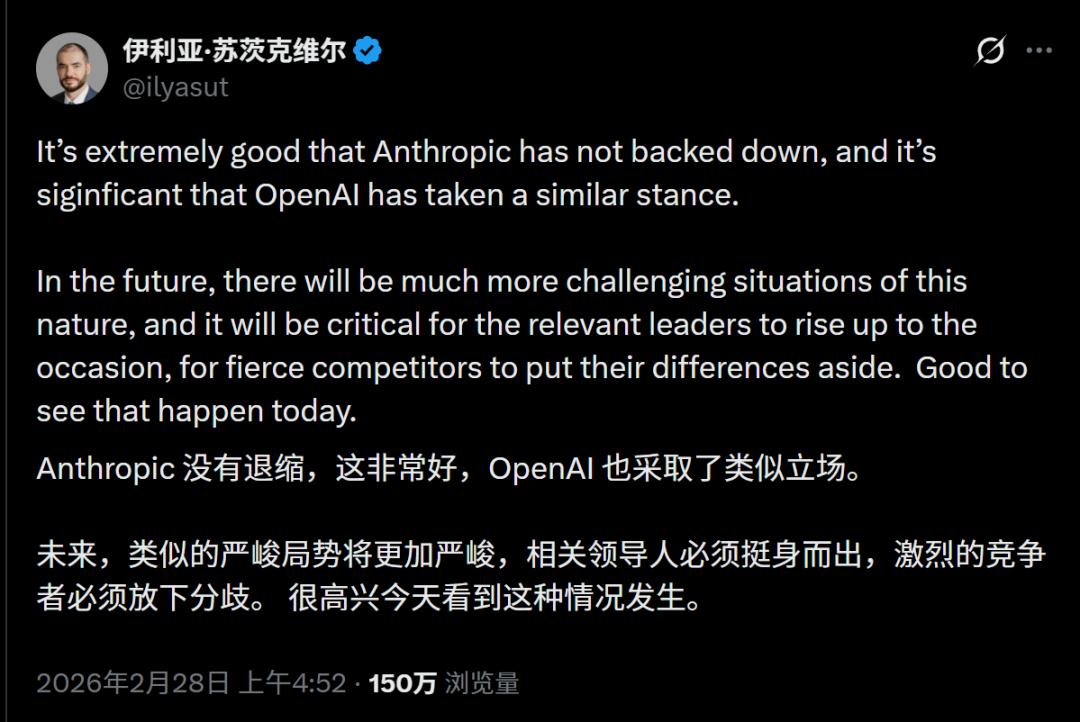

Even Ilya Sutskever, the former OpenAI chief scientist who has long stayed out of public view, has appeared to express support.

In the letter, employees keenly pointed out that the Department of Defense’s attempts to use fear to divide companies would only succeed if everyone remained disconnected.

They hope to establish consensus through the joint letter to collectively resist the Department’s pressure.

Amid the external bombardment, rifts over values inside OpenAI have also widened sharply. Recent violent incidents involving U.S. Immigration and Customs Enforcement personnel have brought these internal conflicts fully into the open.

While executives from Anthropic and Google DeepMind publicly condemned these violent incidents, OpenAI’s leadership remained reticent. Sam Altman cautiously remarked in an internal Slack channel that the situation had gotten a bit out of hand, while also adding that he was encouraged by the president’s response.

The collision between real interests and moral ideals is vividly displayed within OpenAI.

The QuitGPT Movement Sweeps the Internet

Elevating Claude to New Heights

OpenAI’s hypocritical behavior and indifference to violence are triggering an unprecedented trust crisis and market backlash.

The political alignment of executives has infuriated the public, leading to a massive protest movement called QuitGPT.

Netizens have bluntly pointed out that supporting OpenAI equates to indirectly supporting unrestrained violent enforcement and mass surveillance.

OpenAI’s previously celebrated large user base has now become its greatest vulnerability. According to OpenAI’s official data, ChatGPT’s weekly active user count has surpassed 900 million, with over 50 million individual subscribers and more than 9 million paid enterprise users relying on it for work, while Codex has also reached 1.6 million weekly active users.

Users have come to realize that their subscription fees flow, by way of executives’ political donations, toward violent enforcement and the military-industrial complex.

This has greatly accelerated the wave of uninstalls.

Users are now voting with their feet. On platforms like X and Reddit, netizens are posting calls for a full boycott of ChatGPT.

Over 700,000 people have signed the boycott pledge, and social networks are flooded with screenshots of users uninstalling ChatGPT and posts condemning OpenAI.

Driven by this powerful wave of spontaneous public sentiment, users are turning en masse to Claude, which they see as upholding ethical standards.

In just a few days, Claude’s download numbers have skyrocketed, propelling it to the top of the App Store’s free chart.

The public is not easily fooled; voting with their feet is their strongest response to the misdeeds of tech giants and their political pandering.

Epilogue

OpenAI is undergoing a profound transformation, evolving from a technology lab born under a non-profit halo into a giant commercial empire deeply versed in geopolitics and power rent-seeking.

In this era of intertwined capital and power, the quality of technology has receded to the background; what truly matters is who understands Washington’s unspoken rules better.

The black box of code has ultimately opened its doors to power; technology may accurately calculate the probabilities of changing the world, but it can never measure the abyss of human nature and intertwined interests.
